
AI Fundamentals: What Is Artificial Intelligence Really?

Welcome back to my AWS AI Practitioner journey!

Forget the sci-fi movies and the "robot overlord" tropes. As we prep for the AWS Certified AI Practitioner (AIF-C01) exam, we need to view AI through a professional lens: it is a set of technologies designed to solve problems we usually associate with human intelligence.

Defining Artificial Intelligence

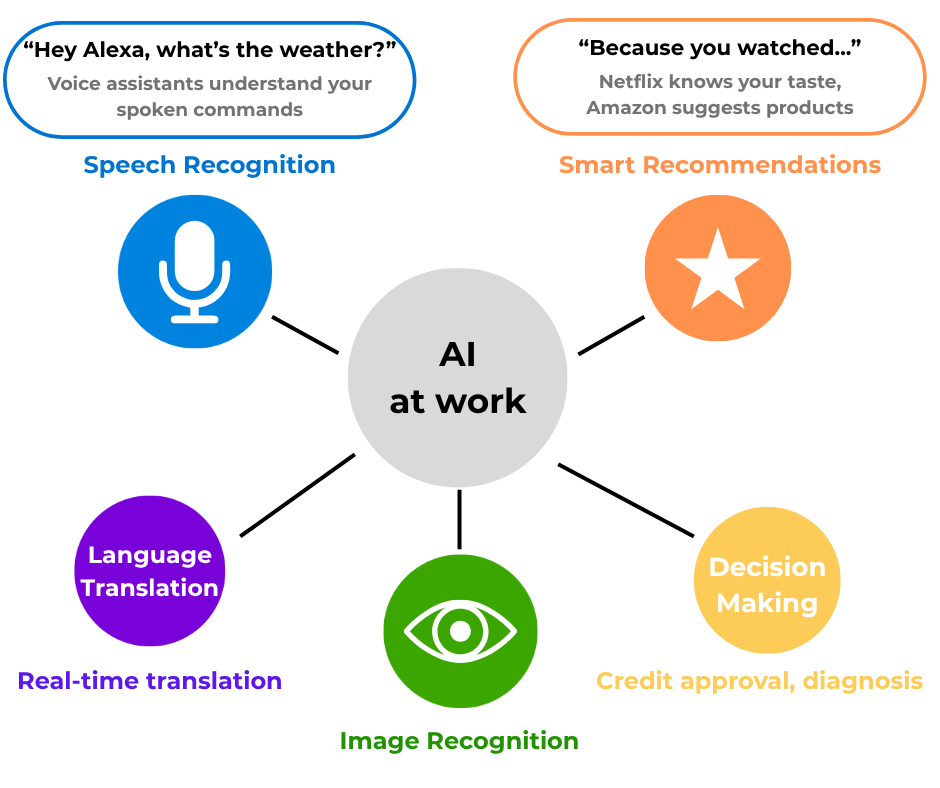

At its core, Artificial Intelligence (AI) refers to techniques that enable computers to mimic human intelligence. Think about what makes us "intelligent" - we can recognize faces, understand language, make decisions, solve problems, and learn from experience. AI is about teaching computers to do these same tasks.

Think of it like this. If a computer can do something that normally requires human knowledge - like understanding what you're saying, recognizing your cat in a photo, or recommending your next Netflix binge - that's AI in action.

The Technical Definition

More formally, AI encompasses:

Problem-solving capabilities that traditionally required human cognition

Pattern recognition in complex data

Decision-making based on inputs and learned experiences

Natural language understanding and generation

Visual perception and interpretation

A Brief History: How We Got Here

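AI isn't new (the term was coined in 1956). It took decades of booms and busts, and three things finally aligning (huge volumes of data, better algorithms, and affordable cloud compute), to make it production-ready for businesses in 2026. Here's how we got here: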

The Birth of AI (1940s-1950s)

1943: Warren McCulloch and Walter Pitts created a model of artificial neurons. Yes, we've been trying to mimic brains since the 1940s!

1950: Alan Turing publishes "Computing Machinery and Intelligence" and proposes the famous Turing Test. His question: "Can machines think?" Still debating this one at dinner parties.

1956: The Dartmouth Conference officially coins the term "Artificial Intelligence." A group of scientists gathered to discuss "thinking machines" - and AI was born.

Early Enthusiasm and First Winter (1960s-1970s)

The 1960s saw huge optimism. Researchers created:

ELIZA (1966): The first chatbot! It could hold conversations (sort of)

General problem solvers and theorem provers

But then reality hit. Computers were expensive, slow, and couldn't handle real-world complexity. Funding dried up in what we call the first "AI Winter."

Revival and Second Winter (1980s-1990s)

1980s: Expert systems brought AI back! These were rule-based systems that could make decisions in specific domains. Think medical diagnosis systems with thousands of "if-then" rules.

But again, limitations emerged. Expert systems were brittle, expensive to maintain, and couldn't learn. Cue AI Winter #2 in the late 1980s and early 1990s.

1997: IBM's Deep Blue defeats world chess champion Garry Kasparov. The world takes notice.

The Deep Learning Revolution (2000s-2010s)

This is where things get exciting:

2006: Geoffrey Hinton and colleagues show that deep neural networks can be trained effectively by pre-training them one layer at a time. Game changer!

2012: AlexNet wins the ImageNet competition by a huge margin using deep learning. Suddenly, computers could "see" better than ever.

2016: DeepMind's AlphaGo (DeepMind is owned by Google) defeats world Go champion Lee Sedol. Go has vastly more possible board positions than chess, so brute-force search alone couldn't crack it. This was huge.

The Current Era (2020s)

We're living in AI's golden age:

2020: GPT-3 shows language models can write, code, and create

2022: ChatGPT launches and breaks the internet (figuratively)

2022-2023: Generative AI explodes as DALL-E, Midjourney, and Stable Diffusion create art

2024-2025: AI becomes embedded everywhere, from your IDE to your toaster (okay, maybe not toasters yet)

Types of AI: Let's Get Practical

When people talk about AI, they're usually referring to one of these categories:

1. Narrow AI (What We Have Now)

The AIF-C01 exam focuses almost entirely on Narrow (Weak) AI. This is what we use today:

Virtual assistants (Alexa, Siri)

Recommendation engines (Netflix, Amazon)

Facial recognition systems (unlocking your phone, tagging on social media)

Language translators

Game-playing AI (Chess, Go)

Autonomous vehicles

Pro-tip: For the exam and the real world, remember that everything we build on AWS today is Narrow AI.

These systems are incredibly good at their specific tasks but can't generalize. Your chess AI can't suddenly start driving a car.

2. General AI (The Holy Grail)

Also called "Strong AI" or AGI (Artificial General Intelligence). This would be AI that matches human intelligence across all domains. It could learn any task, reason abstractly, and transfer knowledge between domains.

Current status: We're not there yet. Not even close. Despite what sci-fi movies suggest!

3. Super AI (The Far Future?)

Theoretical AI that surpasses human intelligence in all aspects. This is pure speculation and the stuff of philosophy debates and Hollywood blockbusters.

Real-World AI Use Cases That Actually Matter

Let's move from theory to practice. Here's where AI is making a real difference today:

Healthcare

Medical imaging: AI helps detect cancer in mammograms and CT scans, in some studies matching or exceeding human radiologists on specific tasks

Drug discovery: AI helps identify promising drug candidates faster, shortening parts of early-stage research from years to months

Personalized treatment: AI analyzes patient data to recommend tailored treatment plans

Mental health: AI chatbots provide 24/7 support and early intervention

Finance

Fraud detection: AI spots unusual patterns in milliseconds

Risk assessment: More accurate credit scoring and loan approvals

Algorithmic trading: AI makes split-second market decisions

Customer service: AI chatbots handle routine banking queries

Transportation

Autonomous vehicles: From Tesla's Autopilot to fully self-driving cars (coming soon™)

Traffic optimization: AI adjusts traffic lights in real-time to reduce congestion

Predictive maintenance: AI predicts when vehicles need service before they break down

Route optimization: Your Uber arrives faster thanks to AI routing

Retail and E-commerce

Personalized recommendations: "You might also like..." (and you probably will)

Inventory management: AI predicts demand and optimizes stock levels

Visual search: Snap a photo, find the product

Dynamic pricing: Prices adjust based on demand, competition, and your browsing history

Education

Personalized learning: AI adapts to each student's pace and style

Automated grading: Frees teachers to focus on teaching

Intelligent tutoring: 24/7 help for students

Predictive analytics: Identifies students at risk of dropping out

Entertainment

Content creation: AI writes scripts, composes music, creates art

Game development: NPCs with more realistic behaviors

Content recommendation: Your "For You" page knows you scary well

Special effects: AI generates realistic CGI faster and cheaper

Key AI Concepts You Need to Know

Before we dive deeper in future posts, here are the fundamental concepts that power AI:

1. Data: The Fuel of AI

In the AWS ecosystem, your model is only as smart as the data you feed it. As you prep for the AIF-C01, keep these three data laws in mind:

GIGO (Garbage In, Garbage Out): This isn't just a cliché; it's a technical bottleneck. If you train a computer vision model solely on high-resolution, perfectly lit images, it will likely fail in a real-world, low-light environment. Problems like this are why data scientists are often said to spend up to 80% of their time cleaning and "wrangling" data rather than building models.

The "Bias In, Bias Out" Trap: AI doesn't have an opinion; it has a pattern. If your training data contains historical bias (like the Amazon recruiting tool, reported in 2018, that favored male candidates because it learned from past hiring trends), the AI will reproduce that bias, and can even amplify it, under the guise of "optimization." For the exam, remember that Responsible AI starts with auditing your training sets for these mirrors of past human error.
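To make "auditing your training sets" concrete, here is a minimal Python sketch that compares selection rates across groups in historical hiring data. The data, group names, and the `selection_rates` and `disparate_impact_ratio` helpers are hypothetical; the 0.8 cutoff is the "four-fifths" rule of thumb from US employment guidelines, used here only as an illustrative flag. On AWS, Amazon SageMaker Clarify is the managed service for this kind of bias analysis.

```python
from collections import defaultdict

def selection_rates(records):
    """records: (group, was_selected) pairs -> {group: fraction selected}."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical historical hiring outcomes
history = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 4 + [("group_b", False)] * 6
)
rates = selection_rates(history)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))  # {'group_a': 0.8, 'group_b': 0.4} 0.5
if ratio < 0.8:
    print("Potential bias: review this data before training on it")
```

A model trained on this history would likely learn that group_b is "less hireable," which is exactly the pattern you want to catch before training.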

Quality Over Volume: Don't get distracted by "Big Data" hype. Ten thousand high-fidelity, accurately labeled data points are often far more valuable than a million rows of "dirty" or mislabeled noise. Whether you're working in Amazon SageMaker or anywhere else, clean data usually beats raw scale.
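Here is a minimal sketch of basic "wrangling" in plain Python, assuming a small hypothetical set of labeled emails: it normalizes inconsistent labels, drops rows with missing or empty fields, and removes duplicates. Real pipelines do far more, but the idea is the same: fewer, cleaner rows.

```python
VALID_LABELS = {"spam", "inbox"}

def clean(rows):
    """Return de-duplicated rows with normalized, valid labels and non-empty text."""
    seen = set()
    cleaned = []
    for row in rows:
        text = (row.get("text") or "").strip()
        label = (row.get("label") or "").strip().lower()
        if not text or label not in VALID_LABELS:
            continue  # drop empty text, missing labels, and unknown labels
        key = (text.lower(), label)
        if key in seen:
            continue  # drop exact duplicates
        seen.add(key)
        cleaned.append({"text": text, "label": label})
    return cleaned

raw = [
    {"text": "Win a free prize now", "label": "spam"},
    {"text": "Win a free prize now", "label": "spam"},        # duplicate
    {"text": "Meeting moved to 3pm", "label": "inbox"},
    {"text": "   ", "label": "inbox"},                          # empty text
    {"text": "Your invoice is attached", "label": " Inbox "},  # messy label
    {"text": "Cheap meds online", "label": None},               # missing label
]
cleaned = clean(raw)
print(len(raw), "->", len(cleaned))  # 6 -> 3
```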

2. Algorithms: The Logic Frameworks

Algorithms are the mathematical recipes that turn raw data into actionable patterns. For the AWS AI Practitioner exam, you need to distinguish between three primary learning styles:

Supervised Learning (The "Labeled" Approach): This is the most common path for business problems. You provide the algorithm with a dataset where the "answers" are already known—like 10,000 emails already marked as "Spam" or "Inbox." The model learns the characteristics of each and applies that logic to new data.

AWS Use Case: Using Amazon Comprehend to classify support tickets based on historical tags.
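To see the "learn from labeled answers" idea in code, here is a tiny Naive Bayes spam classifier in plain Python. Naive Bayes is a swapped-in teaching technique, not a description of how Amazon Comprehend works internally, and the training emails and the `train_naive_bayes` and `predict` helpers are hypothetical.

```python
import math
from collections import Counter

def tokenize(text):
    return text.lower().split()

def train_naive_bayes(examples):
    """examples: (text, label) pairs. Learns label frequencies and word counts per label."""
    label_counts = Counter(label for _, label in examples)
    word_counts = {label: Counter() for label in label_counts}
    vocab = set()
    for text, label in examples:
        tokens = tokenize(text)
        word_counts[label].update(tokens)
        vocab.update(tokens)
    return label_counts, word_counts, vocab

def predict(model, text):
    """Pick the label with the highest log-probability for this text."""
    label_counts, word_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in label_counts.items():
        score = math.log(count / total)
        label_total = sum(word_counts[label].values())
        for token in tokenize(text):
            # Add-one (Laplace) smoothing so unseen words don't zero out the score
            score += math.log((word_counts[label][token] + 1) / (label_total + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical labeled training data: the "answers" are already known
training_data = [
    ("win a free prize now", "spam"),
    ("claim your free gift card", "spam"),
    ("cheap meds limited offer", "spam"),
    ("meeting moved to 3pm", "inbox"),
    ("can you review my pull request", "inbox"),
    ("lunch tomorrow with the team", "inbox"),
]
model = train_naive_bayes(training_data)
print(predict(model, "free prize offer"))       # spam
print(predict(model, "team meeting tomorrow"))  # inbox
```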

Unsupervised Learning (Pattern Discovery): Here, the data has no labels. The algorithm's job is to find hidden structures or clusters on its own. It’s perfect for when you don't know what you’re looking for yet.

AWS Use Case: Using Amazon Personalize to find "segments" of users who have similar buying habits without manually defining those groups.
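Here is a minimal k-means clustering sketch in plain Python, the classic unsupervised technique for finding groups without labels. The customer data (orders per month, average basket in dollars) is hypothetical, and this is a generic illustration rather than what Amazon Personalize does under the hood. Nobody tells the algorithm which customers are "budget" shoppers or "big spenders"; it discovers the two groups itself.

```python
import math
import random

def kmeans(points, k, iterations=20, seed=0):
    """Plain k-means: assign each point to its nearest centroid, then move centroids."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k randomly chosen points
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # New centroid = mean of its cluster (keep the old one if a cluster is empty)
        centroids = [
            tuple(sum(dim) / len(c) for dim in zip(*c)) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    labels = [min(range(k), key=lambda i: math.dist(p, centroids[i])) for p in points]
    return centroids, labels

# Hypothetical customers: (orders per month, average basket in dollars), no labels
customers = [(2, 15), (3, 20), (2, 18), (12, 120), (14, 110), (13, 130)]
centroids, labels = kmeans(customers, k=2)
print(labels)
```

The first three customers end up sharing one cluster ID and the last three the other (which ID is which depends on the random start). In practice you would scale features first, since here basket size dominates the distance.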

Reinforcement Learning (Trial and Error): This is about optimizing a path toward a specific reward. The agent takes an action, receives feedback (a "reward" or a "penalty"), and adjusts its strategy to maximize the score.

AWS Use Case: AWS DeepRacer, where a virtual car learns to navigate a track by being "rewarded" for staying on the line and "penalized" for going off-track.
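Here is a minimal tabular Q-learning sketch of the same reward/penalty loop, assuming a toy one-dimensional "track" with positions 0 to 4: reaching position 5 earns +1 and driving off the left edge costs -1. The environment, reward values, and hyperparameters are all hypothetical, and DeepRacer itself uses deep RL on a far richer simulator; this only shows the trial-and-error update rule.

```python
import random

ACTIONS = ("left", "right")
GOAL = 5  # positions 0..4 are on the track; reaching 5 finishes the lap

def step(state, action):
    """Toy environment: returns (next_state, reward, done)."""
    nxt = state + (1 if action == "right" else -1)
    if nxt < 0:
        return nxt, -1.0, True  # drove off the track: penalty
    if nxt == GOAL:
        return nxt, 1.0, True   # reached the finish line: reward
    return nxt, 0.0, False

def train(episodes=1000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=42):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(GOAL) for a in ACTIONS}
    for _ in range(episodes):
        state = rng.randrange(GOAL)  # random starting position
        for _ in range(100):  # cap episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
            nxt, reward, done = step(state, action)
            # Q-learning update: nudge the estimate toward reward + discounted best future value
            target = reward if done else reward + gamma * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            if done:
                break
            state = nxt
    return q

q = train()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
print(policy)
```

After training, the greedy policy should choose "right" from every position: the agent was never told the rule, it learned it from rewards and penalties.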

3. Compute: The "Muscle" Behind the Math

Raw logic isn't enough; AI requires massive computational scale to process billions of parameters. In the AWS world, this is about moving from "General Purpose" to "Purpose-Built" hardware.

GPU vs. CPU: A CPU is great for sequential tasks, but AI training requires massive parallelism. GPUs (and increasingly TPUs) allow the system to perform thousands of simple calculations simultaneously.
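Why does parallelism matter so much? In a matrix multiplication (the core operation in neural networks), every output cell is an independent dot product, so thousands can be computed at once. This plain-Python sketch makes that independence explicit by handing each cell to a thread pool. It is conceptual only: Python threads won't give a real speedup here because of the GIL, whereas frameworks running on GPUs or other accelerators execute these dot products in parallel in hardware.

```python
from concurrent.futures import ThreadPoolExecutor

def dot(row, col):
    """One output cell: an independent multiply-and-add over a row and a column."""
    return sum(a * b for a, b in zip(row, col))

def matmul_parallel(A, B, workers=4):
    cols = list(zip(*B))  # columns of B
    cells = [(i, j) for i in range(len(A)) for j in range(len(cols))]
    # No cell depends on any other, so they can be computed in any order, concurrently
    with ThreadPoolExecutor(max_workers=workers) as pool:
        values = list(pool.map(lambda ij: dot(A[ij[0]], cols[ij[1]]), cells))
    n = len(cols)
    return [values[r * n:(r + 1) * n] for r in range(len(A))]

result = matmul_parallel([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result)  # [[19, 22], [43, 50]]

# Scale check: multiplying two 4096 x 4096 matrices means 4096 * 4096 independent dot products
print(4096 * 4096)  # 16777216
```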

AWS Silicon (Trainium & Inferentia): While NVIDIA is the household name, AWS built its own chips—Trainium for training models and Inferentia for running them (inference). This is a key exam point: using specialized hardware can significantly cut your cloud bill.

The Cloud Advantage: We’ve moved from a CapEx model (buying expensive server racks) to an OpEx model. You can rent a cluster of P4d instances to train a model over a weekend and shut them down on Monday. That "elasticity" is what made the current AI boom possible for startups, not just tech giants.

4. Feedback Loops: The Continuous Improvement Cycle

Traditional software is static; it does exactly what the code says until a dev pushes a patch. AI is dynamic—it relies on feedback loops to remain accurate.

Training & Validation: Before a model goes live, it's evaluated against a validation set: data held out from training that the model has never seen. This helps confirm the model didn't just memorize the training data (overfitting) but actually learned the underlying patterns.

Production Monitoring: Once a model is in the wild, it needs a "watchdog." If real-world data starts looking different from the training data (data drift, which leads to what's often called model drift), performance can tank.

User Signals: Every time a user corrects a chatbot or marks an email as "not spam," that data can be fed back into the next training cycle. This creates a "flywheel effect" where the system can keep getting better the more it is used.
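Here is a minimal sketch of why a held-out validation set catches overfitting, using hypothetical data where the true pattern is "label is 1 when x is at least 50." A model that memorizes the training rows looks perfect on training data but drops to coin-flip accuracy on unseen data, while a model that learns the threshold generalizes.

```python
def accuracy(model, data):
    return sum(model(x) == y for x, y in data) / len(data)

# Hypothetical data: the true rule is "label is 1 when x >= 50"
data = [(x, int(x >= 50)) for x in range(100)]
train = [(x, y) for x, y in data if x % 2 == 0]       # even x for training
validation = [(x, y) for x, y in data if x % 2 == 1]  # odd x held out

# Model 1 memorizes the training set and guesses 0 for anything unseen
lookup = dict(train)

def memorizer(x):
    return lookup.get(x, 0)

# Model 2 learns one threshold: the training x that best separates the labels
def make_threshold_model(t):
    return lambda x: int(x >= t)

best_t = max((x for x, _ in train), key=lambda t: accuracy(make_threshold_model(t), train))
threshold_model = make_threshold_model(best_t)

for name, model in [("memorizer", memorizer), ("threshold", threshold_model)]:
    print(name, accuracy(model, train), accuracy(model, validation))
# memorizer 1.0 0.5
# threshold 1.0 1.0
```

Both models score 100% on training data; only the validation set reveals which one actually learned the pattern.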
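One common way to put a number on drift is the Population Stability Index (PSI), which compares how a feature's values are distributed across bins in training versus production. This is a minimal plain-Python sketch; the bin edges and sample values are hypothetical, and the thresholds mentioned in the comments (about 0.1 for "watch" and 0.25 for "significant") are widely used rules of thumb, not AWS-defined limits. On AWS, Amazon SageMaker Model Monitor is the managed option for this kind of watchdog.

```python
import math

def bin_proportions(values, edges):
    """Fraction of values falling in each [edges[i], edges[i+1]) bin."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    # Floor at a tiny value so empty bins don't cause log(0) or division by zero
    return [max(c / len(values), 1e-6) for c in counts]

def psi(expected, actual, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_proportions(expected, edges)
    a = bin_proportions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

edges = [0, 4, 7, math.inf]
training_values = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
production_values = [6, 7, 8, 9, 10, 11, 12, 13, 14, 15]

print(round(psi(training_values, training_values, edges), 3))  # 0.0: no drift
print(round(psi(training_values, production_values, edges), 2))  # well above 0.25: investigate
```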
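Here is a toy version of that flywheel, assuming a deliberately simple keyword-based spam filter (the `learn_spam_words` and `classify` helpers are hypothetical): it misses a new kind of spam, the user's "mark as spam" signal is added to the training set, and the retrained filter catches similar emails next time. Real systems retrain statistical models on batches of such signals, but the loop has the same shape.

```python
def learn_spam_words(training_set):
    """Toy 'training': words seen in spam examples but never in legitimate ones."""
    spam_words, inbox_words = set(), set()
    for text, label in training_set:
        (spam_words if label == "spam" else inbox_words).update(text.lower().split())
    return spam_words - inbox_words

def classify(spam_words, text):
    return "spam" if set(text.lower().split()) & spam_words else "inbox"

training_set = [("win free prize now", "spam"), ("meeting at noon", "inbox")]
spam_words = learn_spam_words(training_set)

# In production, a new kind of spam slips through
email = "exclusive crypto giveaway"
prediction = classify(spam_words, email)
print(prediction)  # inbox

# User signal: the user clicks "mark as spam"
user_feedback = [(email, "spam")]

# Next training cycle: fold the signals into the training set and retrain
training_set.extend(user_feedback)
spam_words = learn_spam_words(training_set)
after = classify(spam_words, "crypto giveaway ends tonight")
print(after)  # spam
```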

The AI Landscape Today

As we prepare for the AWS AI Practitioner exam, it's crucial to understand the current AI ecosystem:

Major Players

Tech Giants: Google, Amazon, Microsoft, Meta, Apple

AI-First Companies: OpenAI, Anthropic, Stability AI

Hardware Leaders: NVIDIA (those precious GPUs!), AMD, Intel

Cloud Providers: AWS, Azure, Google Cloud (where AI happens at scale)

Hot Trends

Generative AI: Creating new content (text, images, code, music)

Large Language Models: Models like GPT-4, Claude, and Gemini that understand and generate human language

Multimodal AI: Systems that work with text, images, and audio together

AI Ethics: Ensuring AI is fair, transparent, and beneficial

Edge AI: Running AI directly on devices for privacy and speed

Why This Matters for AWS AI Practitioner

Understanding these fundamentals is crucial because:

AWS builds on these concepts: Every AWS AI service implements these principles

Better decision-making: You'll know which AWS service fits your use case

Cost optimization: Understanding AI helps you choose efficient solutions

Real-world application: You'll bridge theory and practice effectively

Common Misconceptions About AI

Let's bust some myths:

❌ "AI will replace all jobs"

Reality: AI augments human capabilities. It's changing jobs, not eliminating them entirely. New roles are emerging (prompt engineer, anyone?).

❌ "AI is sentient/conscious"

Reality: Current AI is pattern matching at scale. It's not self-aware, despite convincing conversations.

❌ "AI is always right"

Reality: AI makes mistakes, can be biased, and has limitations. Always verify critical decisions.

❌ "AI is too complex for non-techies"

Reality: Modern AI tools are becoming user-friendly. You don't need a PhD to use AI effectively.

Your AI Journey Checklist

As you embark on learning AI for AWS, remember:

[ ] AI is about solving human-like problems with computers

[ ] We're in the era of Narrow AI - powerful but specialized

[ ] Data quality is crucial for AI success

[ ] AI is already transforming every industry

[ ] Understanding fundamentals helps you use AWS AI services better

What's Next?

Now that we understand what AI is and where it came from, we're ready to dive deeper. In our next post, we'll explore Machine Learning - the engine that powers modern AI. We'll look at how computers actually learn from data and the different approaches they use.

Get ready to understand:

How machines learn without explicit programming

The difference between supervised, unsupervised, and reinforcement learning

Why your Netflix recommendations are so eerily accurate

How to think about ML in the context of AWS services

Key Takeaways

AI is about mimicking human intelligence - not creating sentient beings

We've been at this for 70+ years - overnight success takes decades

Current AI is narrow but powerful - excellent at specific tasks

AI is already everywhere - from healthcare to entertainment

Understanding AI helps you leverage AWS - better decisions, better solutions

Remember, we're all learning together. AI might seem complex, but at its heart, it's about teaching computers to be helpful in human-like ways. And with AWS making these tools accessible, we're all capable of building AI-powered solutions.

Ready to continue the journey? Let's demystify AI together, one concept at a time!

Study Resources: